Everyone should install VS Code plus some LLMs: writing macros/add-ons just got easier ("mm->anki")

Hi,

In mid-2023, when there was only the web version of ChatGPT 4, I copy/pasted many rounds of code to write the MindManager-to-Anki macro script.

Now, with VS Code, you don't need copy/paste; the LLM reads your script files directly from disk.

It provides "snapshots" through git (git commits).

You also get better "engines", i.e. Claude Code CLI or Gemini CLI, which give you the ORIGINAL context window (somewhat like the RAM size of the LLM), instead of Copilot's or Cursor's minimal quota.

Here is V2 of my mm2ak script. Previously it only handled Q&A-type cards; now it supports cloze too (indeed, just point the LLM at "Obsidian to Anki" and it can grab the engine/logic for you).
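For anyone curious what "supporting cloze" boils down to, here is a minimal sketch in Python (not the actual mm2ak macro, which is a MindManager .mmbas script). It assumes a hypothetical convention where the part of a topic to hide is wrapped in `==...==`; that marker and the function names are my own illustration. The only fixed part is Anki's cloze syntax, `{{c1::text}}`.

```python
import re

# Assumption: the ==...== marker is a made-up convention for this sketch,
# not necessarily what the mm2ak macro uses.
MARK = re.compile(r"==(.+?)==")


def to_anki_cloze(topic_text: str) -> str:
    """Replace each ==span== with Anki's {{cN::span}} syntax, numbering from 1."""
    counter = 0

    def repl(match: re.Match) -> str:
        nonlocal counter
        counter += 1
        return "{{c%d::%s}}" % (counter, match.group(1))

    return MARK.sub(repl, topic_text)


def note_type(topic_text: str) -> str:
    """Pick the Anki note type: Cloze if any marker is present, else Basic (Q&A)."""
    return "Cloze" if MARK.search(topic_text) else "Basic"
```

So a topic without markers stays a Q&A card, and a topic with markers becomes one cloze note with one deletion per marked span.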

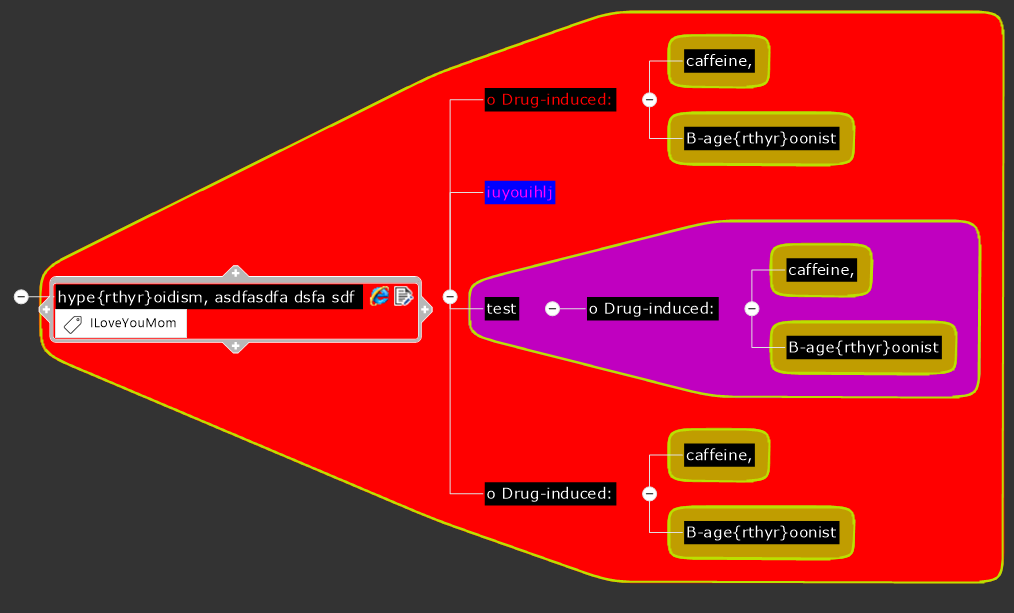

mindmap:

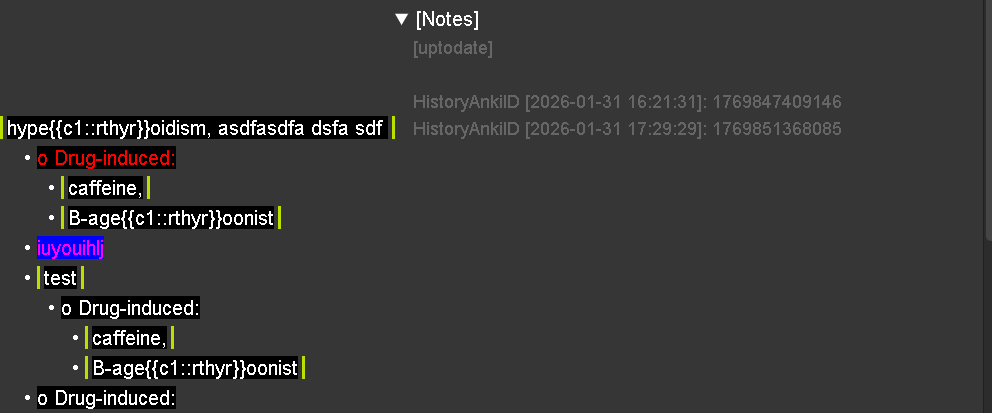

ankicard:

Even the font color/background comes through; boundaries (working)

yo~~

The topic notes can be written into COLLAPSIBLE HTML tags!
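A rough sketch of the idea in Python, assuming the collapsible tags are the standard HTML `<details>`/`<summary>` pair. The function name and the "Notes" label are hypothetical, and the real macro builds this inside MindManager rather than in Python.

```python
from html import escape


def collapsible(summary: str, notes: str) -> str:
    """Wrap topic notes in a <details> block so they show folded on the card."""
    # Escape the plain text so characters like < and & do not break the HTML,
    # then turn line breaks into <br> so multi-line notes keep their layout.
    body = escape(notes).replace("\n", "<br>")
    return "<details><summary>%s</summary>%s</details>" % (escape(summary), body)
```

For example, `collapsible("Notes", "line one\nline two")` gives a field value that shows only "Notes" until you click it.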

So I further solved the [reference name] problem.

Now I can have the reference name at the end of the topic text, or in the topic notes,

and I have a macro to switch between them AUTOMATICALLY~
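The switching logic can be sketched like this in Python. This is only an illustration under my own assumptions: the reference is a single trailing `[name]` in square brackets, and topic text and notes are plain strings. The function names are hypothetical, and the real macro works on MindManager topics.

```python
import re

# Assumption: a reference is one [bracketed name] at the very end of the string.
TRAILING_REF = re.compile(r"\s*\[([^\[\]]+)\]\s*$")


def ref_to_notes(text: str, notes: str) -> tuple[str, str]:
    """Move a trailing [reference] from the topic text to the end of the notes."""
    m = TRAILING_REF.search(text)
    if not m:
        return text, notes  # nothing to move
    ref = "[%s]" % m.group(1)
    new_notes = ref if not notes else notes + "\n" + ref
    return text[:m.start()], new_notes


def ref_to_text(text: str, notes: str) -> tuple[str, str]:
    """Move a trailing [reference] from the notes back to the end of the topic text."""
    m = TRAILING_REF.search(notes)
    if not m:
        return text, notes  # nothing to move
    ref = "[%s]" % m.group(1)
    return text + " " + ref, notes[:m.start()]
```

Calling one function and then the other brings the topic back to where it started, which is what makes an automatic toggle safe.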

I couldn't have imagined this years ago.

I like this idea

P.S.

"Obsidian to Anki" is an Obsidian plugin that does the same thing, but for Obsidian.

And you can point the LLM at it to learn the idea, the algorithm.

Ask it to grab the logic and use it in your script!

"Stand on the shoulders of giants"

Very strange: Claude, GPT, Grok, Gemini, all sorts of Western AI, when they write MM macros,

almost always produce errors/wrong syntax.

My quotas ran out, so I HAD to use GLM 4.7.

WTF, I programmed for half a day, and EVERYTHING ran without a single error reported!

Please, I am telling the truth; I am not promoting anything, although I have to say that I am from the third world,

so GLM 4.7 is more economical for us. (Although I also have ALL those Western LLMs.)

I just want to share this with you guys and make the MindManager macro community better.

@Nick, if you tried those Western LLMs before and always got errors reported,

I sincerely recommend GLM 4.7.

I really, really can't explain why.

Two years ago, GPT/Claude already helped me turn mind maps into Anki cards,

and had already introduced folding using HTML summary/details (Anki itself has it, but it's almost impossible to use by hand).

Today, in v2.0+ of my MindManager-to-Anki script, I've found that writing macros in an IDE is much smoother; we are more empowered.

thank you

P.S. For 10+ years I desperately wanted such an add-on, but how can a student pay a programmer to write one?

I got laughed at by others.

Today I use paid LLMs, but their free quota would likely be just about enough.

thanks

But I must say, GLM 4.7 wrote the special function,

but it didn't make the old main program call it.

So, as a layman, I sent Gemini the .mmbas and the log file;

Gemini studied the code and told me.

So maybe use Gemini (or another Western LLM) as the brain and GLM 4.7 as the worker.

Maybe GLM 4.7 crawled some WinWrap material that was used for training.

---