My lobster (Autoclaw) can now control MindManager through a COM/REST API

Openclaw + LLM --(network)--> mindm_ultra (python.py) --(stdio)--> MindManager 2020

I ran Autoclaw inside Sandboxie Plus to protect myself.

Title: mindm_ultra — Native Windows COM Bridge for MindManager 20 (REST API)

Body:

mindm is great — cross-platform, well-designed. But on Windows, where MindManager lives natively, I wanted something that talks directly to the COM API with zero translation overhead.

So I built mindm_ultra: a thin Python wrapper around MindManager's native COM object model. No intermediate layer, no schema translation — just pywin32 → COM → MindManager, on 127.0.0.1:5001.
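To make the "localhost plus token" part concrete, here is a minimal sketch of how such a bridge could gate requests. It is not the actual mindm_ultra code: the `Authorization: Bearer` header, the per-run token, and the names `is_authorized`, `BridgeHandler`, and `serve` are all assumptions for illustration, and the COM dispatch is left as a comment.

```python
import hmac
import json
import uuid
from http.server import BaseHTTPRequestHandler, HTTPServer

# Assumption: a fresh UUID token is generated on every start.
TOKEN = str(uuid.uuid4())


def is_authorized(header_value, token=TOKEN):
    """Constant-time check of an 'Authorization: Bearer <token>' header."""
    expected = "Bearer " + token
    return hmac.compare_digest((header_value or "").encode(), expected.encode())


class BridgeHandler(BaseHTTPRequestHandler):
    """Hypothetical handler; a real one would route self.path to COM calls."""

    def do_GET(self):
        if not is_authorized(self.headers.get("Authorization")):
            self.send_error(401, "missing or wrong token")
            return
        # Placeholder response: echo the path instead of touching MindManager.
        payload = json.dumps({"path": self.path}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port=5001):
    # Bind to loopback only, so other hosts cannot reach the bridge.
    HTTPServer(("127.0.0.1", port), BridgeHandler).serve_forever()
```

Binding to 127.0.0.1 keeps the bridge off the LAN, and the token check stops other local processes from driving MindManager by accident.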

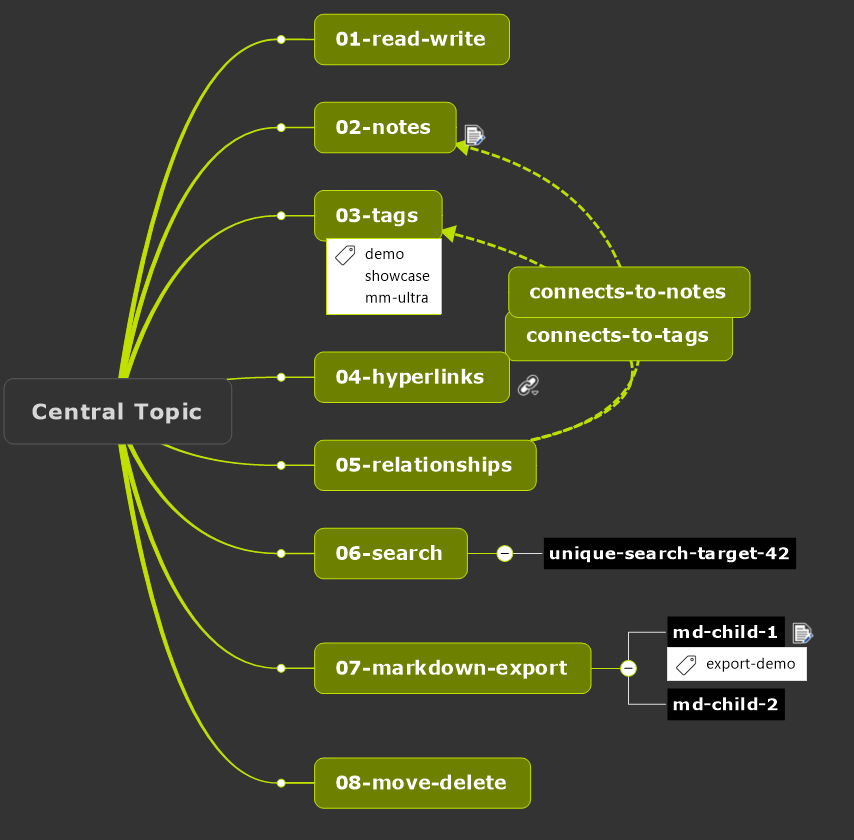

17 endpoints, UUID token auth:

- GET /topic?guid=X: read a topic and all its metadata
- GET /topic/children?guid=X: direct children
- GET /map?depth=N: full recursive tree
- GET /search?q=text: case-insensitive substring search
- GET /selection: currently selected topic
- GET /export/markdown?extra=...: toggleable metadata (guid, notes, tags, hyperlinks)
- POST /topic: create subtopic
- POST /topic/tag: add text label
- POST /topic/hyperlink: URL or topic-to-topic (preserves #oid for bi-linking)
- POST /relationship: relationship line with label
- PUT /topic: rename
- PUT /topic/notes: rich text notes
- PUT /topic/move: reparent
- DELETE /topic: delete topic and its subtree
- DELETE /topic/tag: remove tag
- DELETE /relationship: remove relationship
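For anyone who wants to poke at the endpoints from a script, a small client helper could look roughly like this. The paths and the `q`/`guid` query parameters come from the list above; the Bearer header, the JSON request and response format, and the helper names `build_request` and `call` are assumptions, so adjust them to whatever the bridge really expects.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "http://127.0.0.1:5001"  # host and port as stated in the post


def build_request(method, path, token, params=None, body=None):
    """Build (but do not send) a request for the bridge."""
    url = BASE_URL + path
    if params:
        url += "?" + urllib.parse.urlencode(params)
    data = json.dumps(body).encode("utf-8") if body is not None else None
    req = urllib.request.Request(url, data=data, method=method)
    # Assumption: the token travels as a Bearer header; the post does not say.
    req.add_header("Authorization", "Bearer " + token)
    if data is not None:
        req.add_header("Content-Type", "application/json")
    return req


def call(req, timeout=5):
    """Send the request and decode the response as JSON (assumed format)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Usage would be something like `call(build_request("GET", "/search", token, params={"q": "roadmap"}))` with the bridge running and `token` set to the UUID it expects.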

Why it's fast on Windows:

- Direct COM — no cross-platform abstraction, no Mermaid generation overhead

- Traversal-based lookup returns real Topic objects, not DocumentObject wrappers. Hyperlinks, TextLabels, etc. all work natively.

- Markdown export is a single recursive traversal with toggleable ####extra blocks for metadata

- Exports to formats such as Markdown, with control over the level of detail
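The "single recursive traversal with toggleable extras" idea from the list above can be sketched over plain dicts. This is only an illustration: the real bridge walks COM Topic objects, and the dict keys, the nested-bullet output layout, and the function name `to_markdown` are assumptions rather than the actual export format.

```python
EXTRA_KEYS = ("guid", "notes", "tags", "hyperlinks")  # toggles named in the post


def to_markdown(topic, extras=frozenset(), depth=0):
    """Render a topic dict and its subtree as nested Markdown bullets.

    `topic` is a hypothetical stand-in for a COM Topic: a dict with "text",
    optional metadata keys, and an optional "children" list.
    """
    indent = "  " * depth
    lines = [f"{indent}- {topic['text']}"]
    # Emit only the metadata the caller switched on, and only when present.
    for key in EXTRA_KEYS:
        if key in extras and topic.get(key):
            value = topic[key]
            if isinstance(value, list):
                value = ", ".join(value)
            lines.append(f"{indent}  - {key}: {value}")
    # One recursive call per child: each topic is visited exactly once.
    for child in topic.get("children", []):
        lines.append(to_markdown(child, extras, depth + 1))
    return "\n".join(lines)
```

Calling `to_markdown(tree)` gives a bare outline, while `to_markdown(tree, {"guid", "tags"})` adds those two fields under each topic that has them.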

Not trying to replace mindm — just the fastest path when you're on Windows and want raw COM fidelity. Feedback welcome.


---